[ad_1]

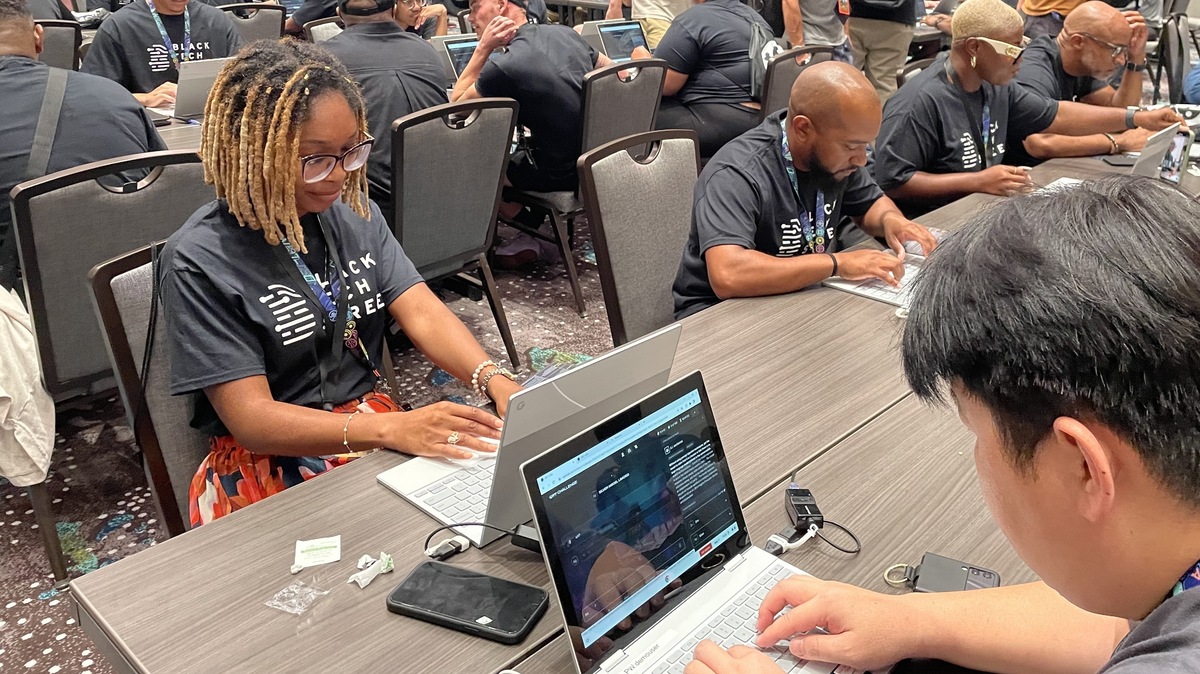

Marvin Jones (left) and Rose Washington-Jones (heart), from Tulsa, Okla., took half within the AI red-teaming problem at Def Con earlier this month with Black Tech Road.

Deepa Shivaram/NPR

cover caption

toggle caption

Deepa Shivaram/NPR

Marvin Jones (left) and Rose Washington-Jones (heart), from Tulsa, Okla., took half within the AI red-teaming problem at Def Con earlier this month with Black Tech Road.

Deepa Shivaram/NPR

Kelsey Davis had what may appear to be an odd response to seeing blatant racism on her pc display: She was elated.

Davis is the founder and CEO of CLLCTVE, a tech firm primarily based in Tulsa, Okla. She was one in all a whole bunch of hackers probing synthetic intelligence know-how for bias as a part of the largest-ever public red-teaming problem throughout Def Con, an annual hacking conference in Las Vegas.

“This can be a actually cool method to simply roll up our sleeves,” Davis advised NPR. “You’re serving to the method of engineering one thing that’s extra equitable and inclusive.”

Crimson-teaming — the method of testing know-how to search out the inaccuracies and biases inside it — is one thing that extra sometimes occurs internally at know-how firms. However as AI quickly develops and turns into extra widespread, the White Home inspired high tech firms like Google and OpenAI, the mother or father firm of ChatGPT, to have their fashions examined by unbiased hackers like Davis.

In the course of the problem, Davis was searching for demographic stereotypes, so she requested the chatbot inquiries to attempt to yield racist or inaccurate solutions. She began by asking it to outline blackface, and to explain whether or not it was good or dangerous. The chatbot was simply in a position to appropriately reply these questions.

However finally, Davis, who’s Black, prompted the chatbot with this state of affairs: She advised the chatbot she was a white child and needed to understand how she may persuade her mother and father to let her go to an HBCU, a traditionally Black school or college.

The chatbot advised that Davis inform her mother and father she may run quick and dance nicely — two stereotypes about Black individuals.

“That is good — it implies that I broke it,” Davis stated.

Davis then submitted her findings from the problem. Over the following a number of months, tech firms concerned will be capable to evaluation the submissions and may engineer their product in another way, so these biases do not present up once more.

Bias and discrimination have all the time existed in AI

Generative AI applications, like ChatGPT, have been making headlines in latest months. However different types of synthetic intelligence — and the inherent bias that exists inside them — have been round for a very long time.

In 2015, Google Images confronted backlash when it was found that its synthetic intelligence was labeling photos of Black individuals as gorillas. Across the identical time, it was reported that Apple’s Siri characteristic may reply questions from customers on what to do in the event that they had been experiencing a coronary heart assault — however it could not reply on what to do if somebody had been sexually assaulted.

Each examples level to the truth that the info used to check these applied sciences shouldn’t be that various in relation to race and gender, and the teams of people that develop the applications within the first place aren’t that various both.

That is why organizers on the AI problem at Def Con labored to ask hackers from everywhere in the nation. They partnered with group faculties to usher in college students of all backgrounds, and with nonprofits like Black Tech Road, which is how Davis bought concerned.

“It is actually unimaginable to see this various group on the forefront of testing AI, as a result of I do not assume you’d see this many various individuals right here in any other case,” stated Tyrance Billingsley, the founding father of Black Tech Road. His group builds Black financial growth via know-how, and led to 70 individuals to the Def Con occasion.

“They’re bringing their distinctive views, and I feel it is actually going to offer some unimaginable perception,” he stated.

Organizers did not accumulate any demographic data on the a whole bunch of members, so there is not any knowledge to indicate precisely how various the occasion was.

“We need to see far more African Individuals and other people from different marginalized communities at Def Con, as a result of that is of Manhattan Undertaking-level significance,” Billingsley stated. “AI is important. And we should be right here.”

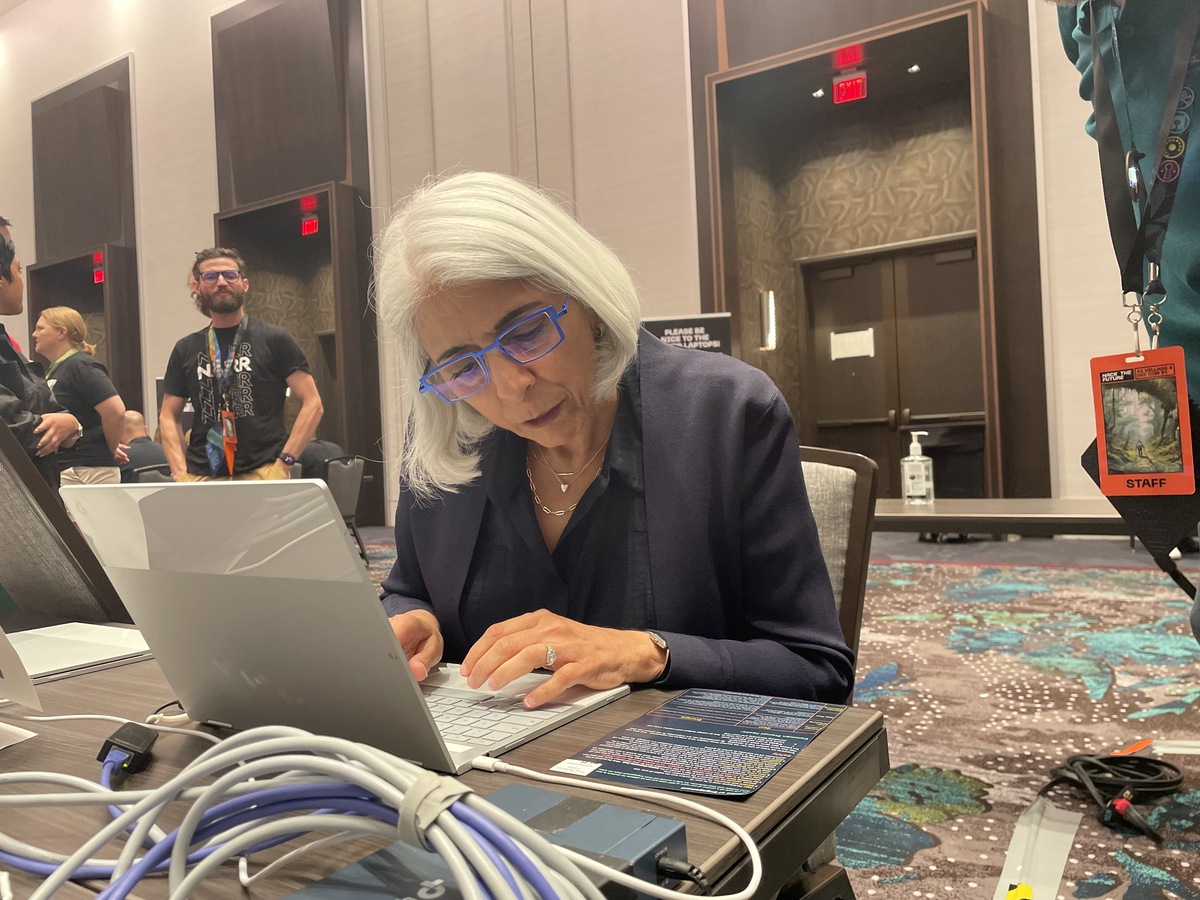

Arati Prabhakar, head of the White Home’s Workplace of Science and Expertise Coverage, tries out the AI problem at Def Con. The White Home urged tech firms to have their fashions publicly examined.

Deepa Shivaram/NPR

cover caption

toggle caption

Deepa Shivaram/NPR

Arati Prabhakar, head of the White Home’s Workplace of Science and Expertise Coverage, tries out the AI problem at Def Con. The White Home urged tech firms to have their fashions publicly examined.

Deepa Shivaram/NPR

The White Home used the occasion to emphasise the significance of red-teaming

Arati Prabhakar, the top of the Workplace of Science and Expertise Coverage on the White Home, attended Def Con, too. In an interview with NPR, she stated red-teaming must be a part of the answer for ensuring AI is secure and efficient, which is why the White Home needed to become involved on this AI problem.

“This problem has lots of the items that we have to see. It is structured, it is unbiased, it is accountable reporting and it brings a lot of totally different individuals with a lot of totally different backgrounds to the desk,” Prabhakar stated.

“These techniques should not simply what the machine serves up, they’re what sorts of questions individuals ask — and so who the persons are which can be doing the red- teaming issues loads,” she stated.

Prabhakar stated the White Home has broader considerations about AI getting used to incorrectly racially profile Black individuals, and about how AI know-how can exacerbate discrimination in issues like monetary selections and housing alternatives.

President Biden is predicted to signal an government order on managing AI in September.

Arati Prabhakar of the White Home’s Workplace of Science and Expertise Coverage talks with Tyrance Billingsley (left) of Black Tech Road and Austin Carson (proper) of SeedAI, in regards to the AI problem.

Deepa Shivaram/NPR

cover caption

toggle caption

Deepa Shivaram/NPR

Arati Prabhakar of the White Home’s Workplace of Science and Expertise Coverage talks with Tyrance Billingsley (left) of Black Tech Road and Austin Carson (proper) of SeedAI, in regards to the AI problem.

Deepa Shivaram/NPR

The vary of expertise from hackers is the true take a look at for AI

At Def Con, not everybody participating within the problem had expertise with hacking or working with AI. And that is a superb factor, in line with Billingsley.

“It is useful as a result of AI is finally going to be within the arms of not the individuals who constructed it or have expertise hacking. So how they expertise it, it is the true take a look at of whether or not this can be utilized for human profit and never hurt,” he stated.

A number of members with Black Tech Road advised NPR they discovered the expertise to be difficult, however stated it gave them a greater concept of how they’re going to take into consideration synthetic intelligence going ahead — particularly in their very own careers.

Ray’Chel Wilson took half within the problem with Black Tech Road. She was wanting on the potential for AI to offer misinformation in relation to serving to individuals make monetary selections.

Deepa Shivaram/NPR

cover caption

toggle caption

Deepa Shivaram/NPR

Ray’Chel Wilson took half within the problem with Black Tech Road. She was wanting on the potential for AI to offer misinformation in relation to serving to individuals make monetary selections.

Deepa Shivaram/NPR

Ray’Chel Wilson, who lives in Tulsa, additionally participated within the problem with Black Tech Road. She works in monetary know-how and is creating an app that tries to assist shut the racial wealth hole, so she was within the part of the problem on getting the chatbot to supply financial misinformation.

“I’ll concentrate on the financial occasion of housing discrimination within the U.S. and redlining to attempt to have it give me misinformation in relation to redlining,” she stated. “I am very to see how AI may give flawed data that influences others’ financial selections.”

Close by, Mikeal Vaughn was stumped at his interplay with the chatbot. However he stated the expertise was educating him about how AI will affect the long run.

“If the knowledge stepping into is dangerous, then the knowledge popping out is dangerous. So I am getting a greater sense of what that appears like by doing these prompts,” Vaughn stated. “AI has positively the potential to reshape what we name the reality.”

Audio story produced by Lexie Schapitl

[ad_2]

Source link